Design Document: You’re Doing Great

“You’re Doing Great” by Hulda Zheng, Jason Chen, Alex Morgan, Jerry Vogel

Story: What’s your story? How does it address the theme “The Toronto that is Not” that Karen challenged you with?

Our story follows the viewer as he/she walks through a seemingly normal Toronto. As the story progresses, the viewer quickly realizes that something about this Toronto is off. Fellow passersby seem unaware of what the viewer is seeing, and even seem suspicious of the viewer himself/herself. Our story interprets the theme in an internal manner. The viewer experiences a version of Toronto that is based on a malfunctioning perception. It’s not the “real” Toronto…or is it?

Audience: Who is your audience?

“You’re Doing Great” is for anyone who is curious about 360° video presented in an avant-garde narrative style. It can be shown to people who are interested in virtual reality storytelling and in trying something a bit abnormal. 360° video and VR are up-and-coming industries, and our project aims to demonstrate how the immersive effect of these media can affect the way a story is conveyed. Our audience is anyone who wants to see Toronto from a different perspective or wants to experience 360° video and VR.

Takeaway: What do you expect your audience will take away from this experience?

We expect the user to get a sense of the simple things that can be done with 360° video, and of how it can be used to explore surroundings or to convey the feeling of being a ghost (people looking at you, but no interactivity) in a world that isn’t quite how it is in reality. In some sense, this is a visual representation of a dérive that puts people into our shoes in a first-person point of view (like a more immersive psychogeographical map). Ultimately, we just want our audience to be engaged with the story, and we thought a first-person point of view would add an interesting element to how one takes in the experience.

Experience Map

Stimulus (objective): See, Hear, Touch, Smell, Taste. Response (subjective): Emotional State.

| Stage | See | Hear | Touch | Smell | Taste | Emotional State |
| --- | --- | --- | --- | --- | --- | --- |
| Attraction | The user sees an ad for the video online or on a poster in the city. | The user hears someone, possibly a friend, talk about the video and recommend it. | The user touches their electronic device, most likely a cell phone, to load up the video. | The user will smell whatever happens to be in their surroundings at the time. | N/A | Curious as to what the video is all about. |
| Entry | The video loads onto their device and begins playing. | The user hears the audio that begins to play once the video starts. | The user feels the headset they are wearing, or whatever device they are using if not a headset. | The user will continue to smell whatever is in their surroundings. | N/A | The user is curious about watching “Toronto happen” around them. |
| Engagement | The user sees a lot of wild transitions and some potentially disorienting movements. | The user will hear all the audio the video has to offer, as well as all the audio glitches that are present within the video. | The user feels the headset or cardboard. They may also feel some disorientation from the video moving while their body doesn’t. | The user will smell their surroundings, and maybe some sweat if the user is sweating at the time. | N/A | The user will feel engaged and interested as they view the video and all its quirks and abnormalities. They will feel completely immersed in the video. |
| Exit | The video is stopped and exited once it has finished playing. | The final bits of audio play as the video comes to an end. | The user will still feel the headset or cardboard as he/she removes it after the video ends. They also feel the cool air if they were sweating in the headset. | The user smells fresh(er) air as they remove the headset. | N/A | The user will feel satisfaction and enjoyment with the video they just watched. |
| Extension | The video will continue to be advertised online and around the city. | The user will recommend the video to others who haven’t watched it, and continue the cycle. | The user may feel the headset again if they decide to watch it again to find the little edits we made in the video. | N/A | N/A | The user will feel a sense of longing and will want to watch the video again. The user may feel a little bit of déjà vu if they decide to walk through places covered in the video. |

Why VR? What unique affordances does 360° video and/or VR offer that makes possible the story you are trying to tell?

VR and 360° video add an enhanced level of immersiveness that is essential for our project to be viewed in the best way possible.

They allow users to observe their surroundings and explore the environment we filmed in. They also open up the option of using unique transitions into entirely new environments, like the ones we did (one moment you’re in a park, the next you’re in a record store). In this sense, we are bringing The Matrix closer to reality. Users can explore several different spots in Toronto in a short period of time, much shorter than if they went out to the real city to explore. As stated before, this is like a more immersive form of the psychogeographical map made from our dérives.

Process Documentation: Provide evidence of your research and design process. Describe your original concept and how it changed through iteration. Discuss what you learned through the ideation/design, iteration of your ideation/design, production, post-production, and describe the tools you chose to use and why you chose to use them and what you learned along the way (e.g. Fader vs. Unity)

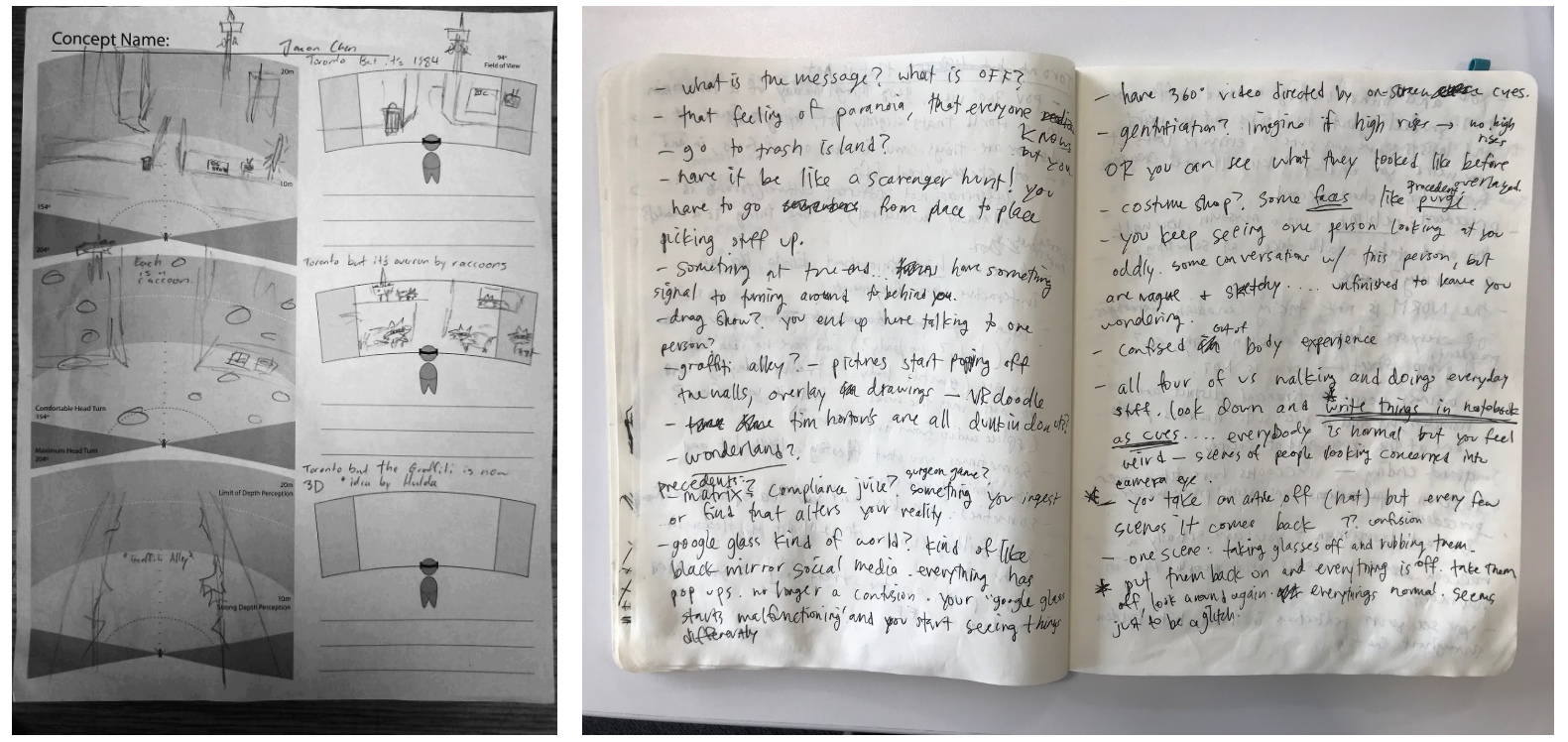

To begin, we came up with a few broad ideas in the format “Toronto, but….” and sketched a single scene of each on a 360° video storyboard. Our ideation process went from small to big. We didn’t have a big idea or an overarching story, but we had lots of ideas for clips that might work well in a 360° video display. We jotted down all these ideas, along with questions that we needed to answer before finalizing an idea, filling several pages of notes.

After some discussion, our original concept was to have a similar viewing of the city, but instead of the glitches and abnormalities that are present throughout our current project, at the end it would be revealed that the viewer was actually a raccoon. It was intended to be a humorous take on storytelling. Since raccoons are so prevalent in downtown Toronto, we thought our idea would poke fun at such an integral part of Toronto culture, but we quickly ran into several problems. The biggest problem was that in order to truly get the perspective of a raccoon, we would have to film at a raccoon’s height. This led to two subsequent problems: the lower height would immediately give away the fact that the user is a small animal, which would take away from the final reveal, and we did not have a means of effectively filming at a raccoon’s height. Those problems ultimately led us to change our idea from the raccoon to our current project.

We took a risk with our project in focusing more on the story than on the technical creative things you can do in Fader and Unity. In virtual reality, it can be uncomfortable and nauseating for the user if the visuals move too quickly or are too jarring. Knowing this, we decided to actually embrace this feeling and see if we could work it to our advantage to add to the story.

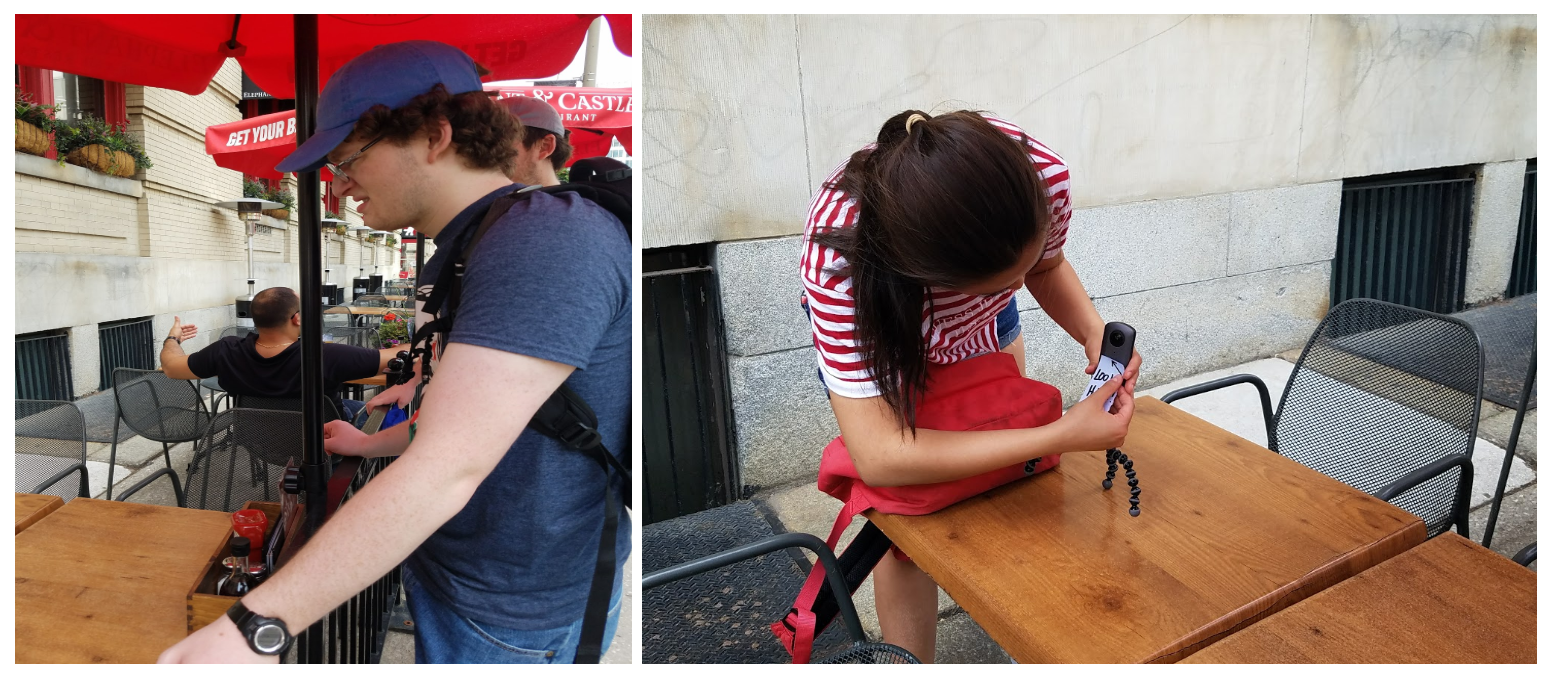

After iterating on our original idea and arriving at the idea we wanted, we began filming the various clips we used in our final video. As we started filming, it became apparent that walking with a “little head massager” (GorillaPod) with an attached camera on your head would garner plenty of odd looks from people passing by. All of them looked at Hulda, oddly enough. We decided to try and use this to our advantage by putting a little sign on the camera that said “Look here!” pointing up at the lens. This way, people’s weird looks could play into our story. It would seem as if everyone was noticing the viewer and looking at them strangely, adding to the oddity of the day. It also adds more interaction to the space. By having the people in the video look the viewer in the “eye”, it seems as if the viewer is being addressed and is an actual active member of the society rather than a fly on the wall.

We started our filming on King Street and gradually moved throughout the surrounding area between King Street and Queen Street, where we filmed at several different locations, such as the Sun Life Financial Building on King Street, the Osgoode Hall Gardens on Queen Street, and Kops Records, which is also on Queen Street. After filming all the clips we needed for the video, we stitched all the necessary clips, and began our post-production work.

During filming, there was an unfortunate incident in which the camera fell off Hulda’s head mid-scene at the record shop. Thankfully, the Ricoh is safe and sound AND we actually decided to use this footage to add to the glitchiness of the Toronto we created. It wasn’t in our original plan, obviously, but it had a big impact on the shaping of the story narrative. After making sure the camera was alright, we went to David to let him know about the incident. As he was tinkering with the edges, he accidentally hit ‘record’ and we ended up with a short clip of this tinkering. We decided to work this accidental clip into our story as well. The ending scene of our story plays on both of these accidents. In the record shop, we further edited the falling camera to make it even more glitchy, and added a few frames of the David recording to make it seem like the viewer has fallen and there is a malfunction in the matrix. The scene cuts to black, David appears to be tinkering with the viewer in an attempt to “fix” him/her, and the scene quickly rewinds. In the next scene, the viewer seems to be reliving the scene in the record shop all over again, as if David had rewound the viewer’s perception and fixed the problem.

A recurring symbol in our project is the hat. In the first chapter at the Osgoode Hall Park, a stranger comes up to the viewer and says that she found his/her hat. Essentially, this hat acts like a marker that triggers the rest of the glitches in this Toronto. Things go awry in every location after the viewer is handed the hat. We also took the opportunity to use putting the hat on as a transition between scenes. At the end, the record shop employee hands the user the same hat in a bag instead of the Drake record the user intended to purchase.

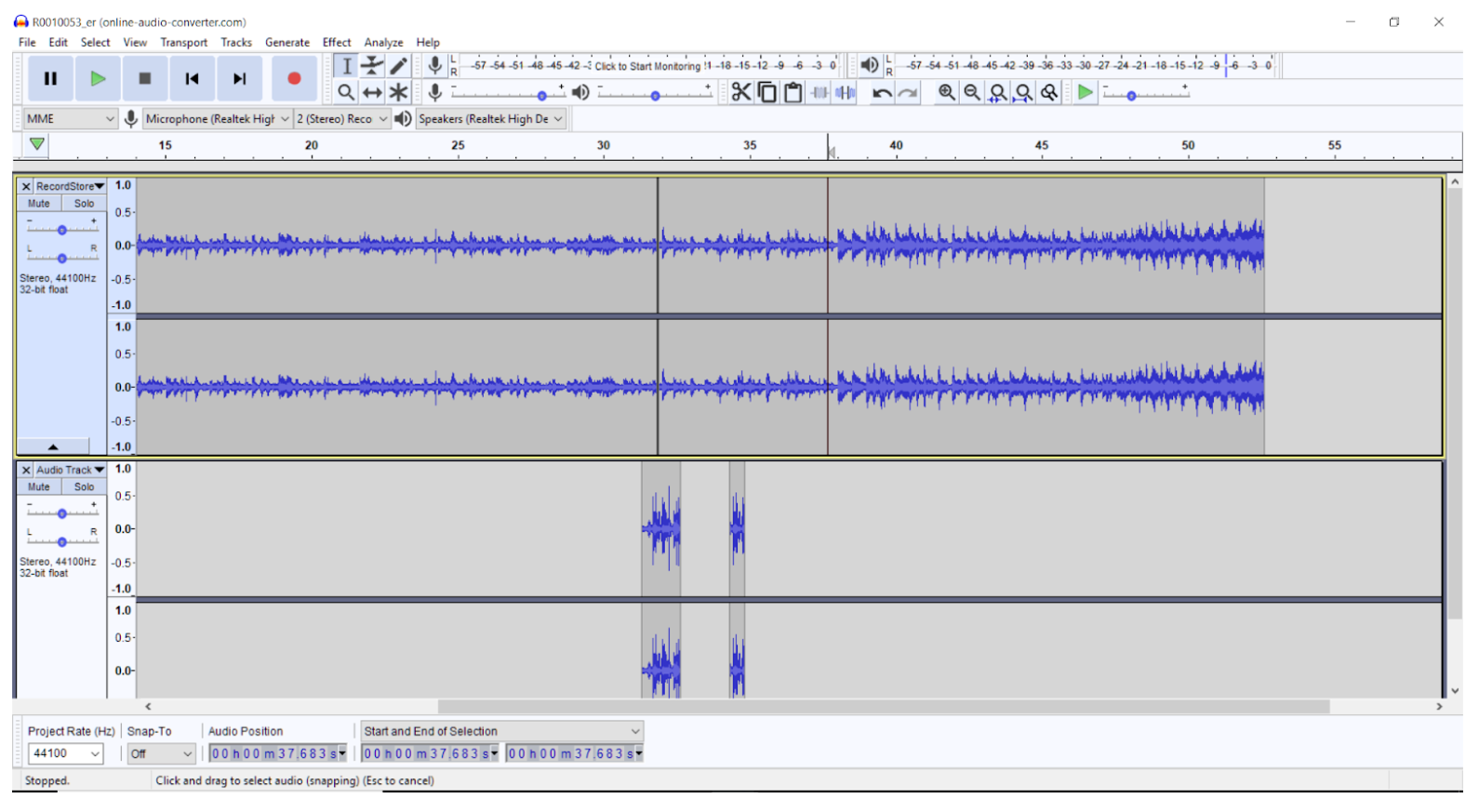

In terms of the audio, we wanted a glitchy and bizarre audio sequence. Thus, we went into Audacity and messed around. Echoes, distortions, making our own static, and deleting/reordering sequences were just some of what we did. To deliver the full glitch experience, making a cohesive yet seemingly broken audio track is imperative. The audio must make enough sense for the viewer to understand what is happening in the experience, but must be distorted and processed enough to still confuse or disorient slightly. Our process was as follows: we watched the video and cherry-picked which parts would be glitched, then used Audacity to distort and change the audio file.
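As a rough illustration of the kinds of edits described above (echo, reordering sequences, and home-made static), here is a minimal Python sketch operating on a plain list of audio samples. It is not what Audacity does internally and not the workflow we actually used; the function names, parameters, and sample values are all hypothetical and chosen only for illustration.

```python
import random

def add_echo(samples, delay, decay=0.5):
    """Mix a delayed, quieter copy of the signal back into itself."""
    out = list(samples) + [0.0] * delay  # extra room for the echo tail
    for i, s in enumerate(samples):
        out[i + delay] += s * decay
    return out

def shuffle_segments(samples, segment_len, seed=0):
    """Cut the signal into fixed-length segments and reorder them."""
    segments = [samples[i:i + segment_len]
                for i in range(0, len(samples), segment_len)]
    rng = random.Random(seed)  # seeded so the "glitch" is repeatable
    rng.shuffle(segments)
    return [s for seg in segments for s in seg]

def make_static(n, amplitude=0.2, seed=0):
    """Generate n samples of uniform random noise as simple static."""
    rng = random.Random(seed)
    return [rng.uniform(-amplitude, amplitude) for _ in range(n)]
```

Applying effects like these only to cherry-picked stretches, rather than the whole track, is what keeps the audio understandable while still feeling broken.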

In terms of the glitchy video effects, some were applied to the entire scene. Others were made in After Effects by tracking and animating masks to localize the glitchiness to select objects, namely the CN Tower.


Big picture vision. A prototype is just a starting point. Imagine you had more time and resources to continue working on the project, where would you like to take it?

The biggest thing we would have done with more time is add more scenes. We had a lot of different scenes planned out for our project, but we had to cut many of them due to time constraints. If we had more time, we would have liked to film, stitch, and implement several more scenes. These include a scene at Graffiti Alley, where the viewer would look around at all the graffiti, then turn around only to find that all the graffiti they had just seen has vanished; a scene where the viewer pets a dog and the dog attempts to bark, but meows like a cat instead; and a scene where you order ice cream at an ice cream shop, but instead of getting ice cream you get a completely random food, such as lasagna.

Another thing we would have liked to add to our video is a little human interaction. Our current video is just a video that has no human interaction whatsoever. Adding even a little interaction, such as viewing markers to interact with the environment, would be really beneficial to our overall video. Adding this interaction would make the experience more immersive, and would complement the video very well by amplifying the various special effects we have.

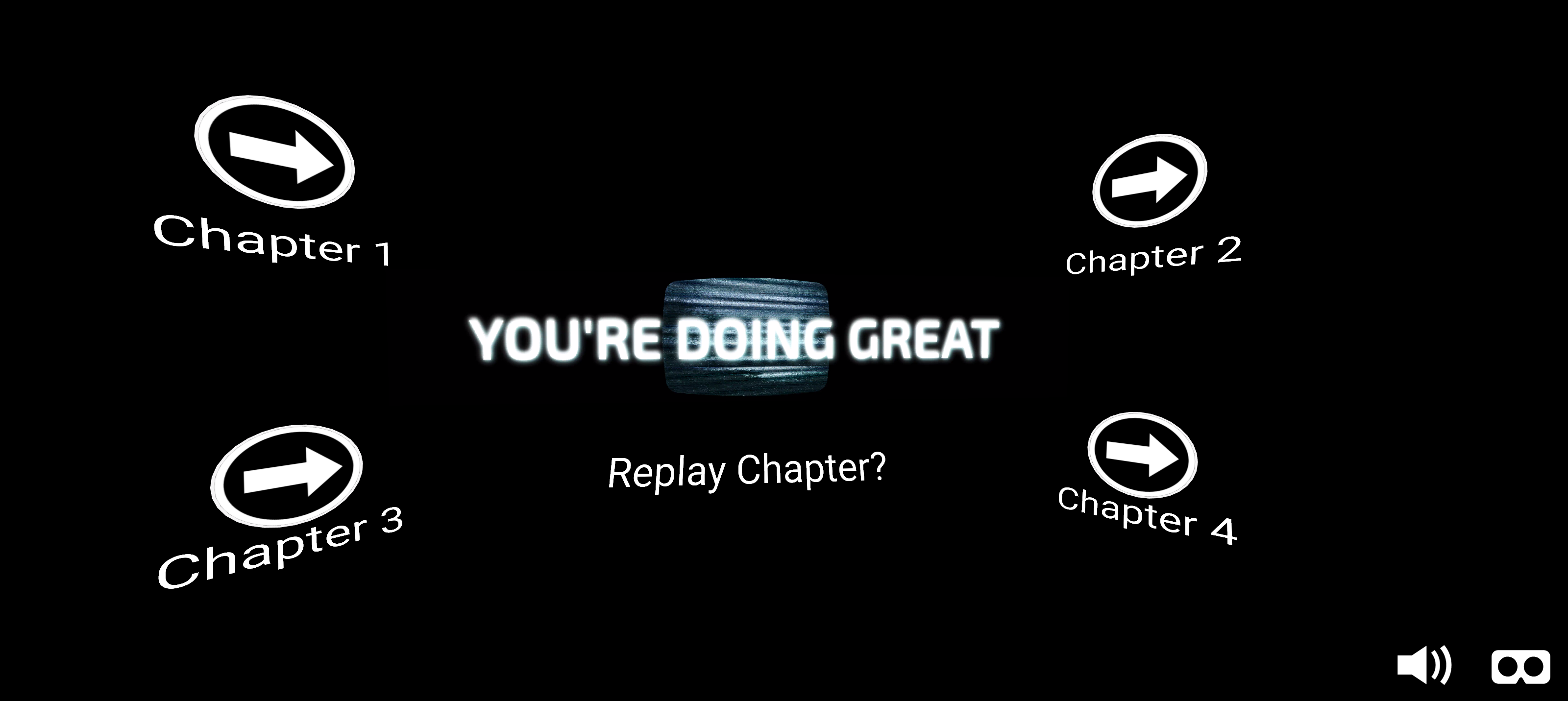

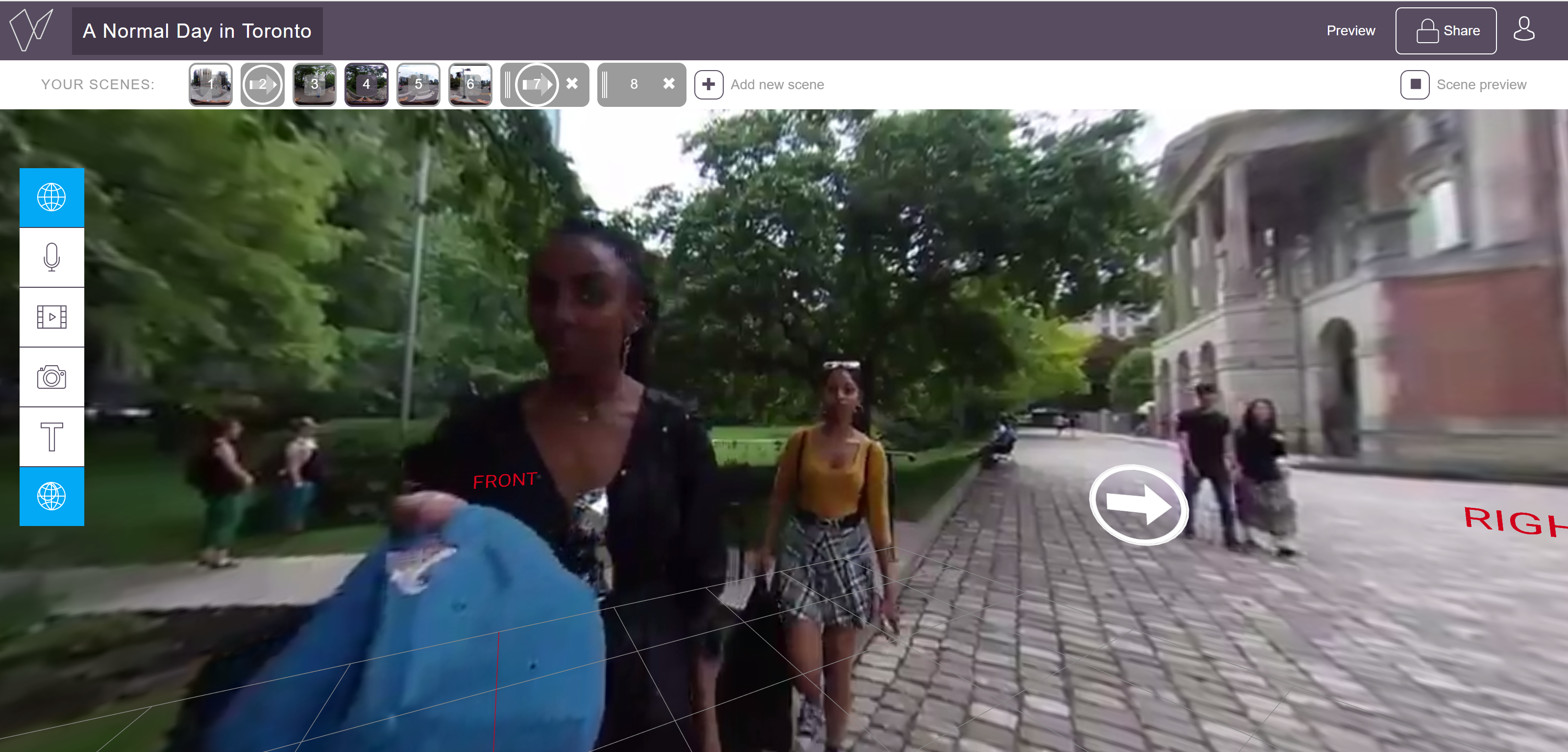

Fader implementation (test):

Because of the limitations of Fader (such as 300MB videos and mandatory screen fades), we separated our video into 4 “chapters”.

Chapter 1 – Osgoode Hall Park

Chapter 2 – King Street

Chapter 3 – HotBlack Coffee

Chapter 4 – Kops Records

These then had to be separated once again into cuts to fit the 300MB limit. Then, interactive markers were placed to continue the story at the pace the user wanted. Each chapter started with a title, but nothing else. The markers were strategically placed near the point of interest, so users would find the next marker and also see the action happening.

An additional problem was that Premiere would not render the video past around 1:25 (min:sec), which meant the cuts had to be done on a separate system. We got past this by using a different program to cut the video.
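To make the splitting step concrete, the following Python sketch estimates how many cuts a chapter needs to stay under Fader's 300MB limit and where the cut points would fall. It assumes a roughly constant bitrate, so file size scales with duration, and it leaves some headroom below the limit. The function name, the headroom value, and the example numbers are hypothetical, not measurements from our project.

```python
import math

def plan_cuts(duration_s, size_mb, limit_mb=300.0, headroom=0.9):
    """Return (start, end) times in seconds for equal-length cuts.

    Assumes constant bitrate, so each cut's size is proportional to
    its duration. `headroom` keeps each cut safely below the limit.
    """
    if duration_s <= 0 or size_mb <= 0:
        raise ValueError("duration and size must be positive")
    n_cuts = max(1, math.ceil(size_mb / (limit_mb * headroom)))
    cut_len = duration_s / n_cuts
    return [(round(i * cut_len, 3), round((i + 1) * cut_len, 3))
            for i in range(n_cuts)]

# Hypothetical example: a 120-second chapter that exported at 700MB.
for start, end in plan_cuts(120, 700):
    print(f"cut from {start}s to {end}s")
```

In practice the cut points would be nudged to land on scene changes, since Fader's mandatory screen fades are less noticeable there.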

After that, we added our working title screen at the end with options to jump to each chapter (which had their own dedicated screens).

For our Fader Test: https://app.getfader.com/projects/a2976862-508a-4a9f-bf83-a6d5416a748e/publish

This was then sent over to the HTC Vive for user testing.

The first thing I noted was how nauseating the experience was. We were expecting some disorientation, but not to that degree. We also noticed that the icons staying on screen detracted from the immersion. It would have been nice if the icons only appeared at the end of a video (or near the end). Also, gazing at an icon took a bit long to trigger it (it would be better if the scenes switched faster).

From this test, we moved some icons around so they were the same distance away and we adjusted the size of the icons a little bit to minimize distraction from the scene.

Future Activity:

For future implementation, we could re-shoot everything and leave only a few scenes moving to make the experience less nauseating, but still jarring. Whether or not this is done, we should use a system other than Fader to bring the interactivity. Best case would be learning Unity in depth, but even Eevo would allow more freedom and better design.

Another option stems from a-way-to-go from the NFB: we’ll still have motion, but it will be more like stop motion, controlled by the user along our linear path. This will be more like jump cuts than a smooth video, which, through watching “older media” (TV, movies, etc.), is possibly more natural and less disorienting. Leaving our video as something to be watched on a flat screen may also be an option.

Either way, the most important edit we would make is negating motion sickness to make the experience more enjoyable.

Further Notes:

We’d like to thank the people of Toronto for helping us with some scenes in this project!
