Augmented Cognition aims to enhance human information-processing capabilities by designing adaptive interfaces driven by estimates of the user's physical, psychological, and cognitive state.

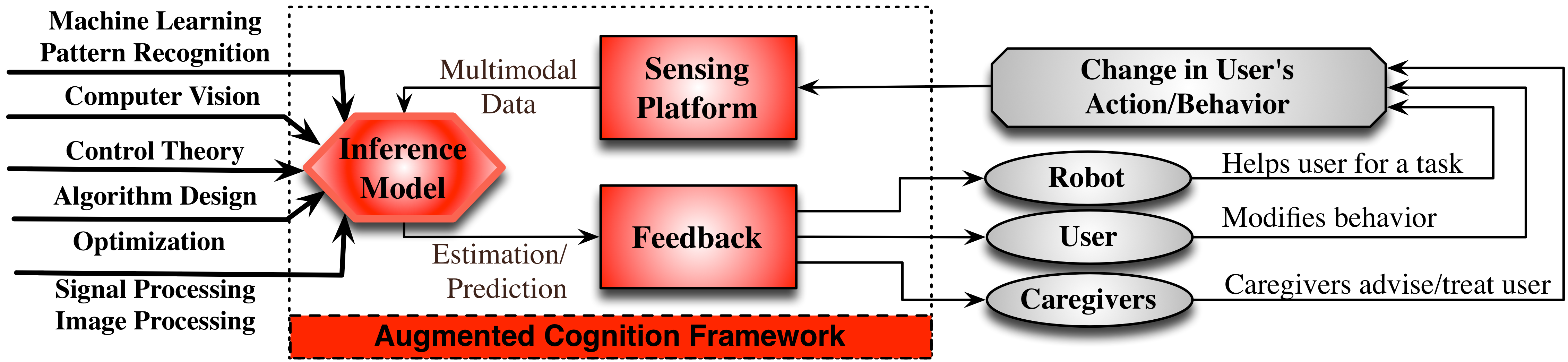

Augmented Cognition Lab (ACLab) research centers on creating augmented cognition systems that enhance human cognition rather than replace it. These systems have three primary components, as shown in the figure above: (1) the sensing element, (2) the analytic element, and (3) the feedback element. The sensing element gathers and fuses multimodal data from the human and the environment, including 4D color-depth videos, neurophysiological signals, and audio/speech information. Robust analytics is the cornerstone of the system. At ACLab, we use both machine learning models and biomechanically and biologically inspired structural models. When possible, we prefer a structural model because it can encode existing domain knowledge and prior research directly, rather than having to learn that knowledge from a large training set. Structural models also tend to be more transparent, which makes their failure modes and edge cases easier to analyze. Careful design of the feedback element is equally critical: unless the feedback is useful, timely, and understandable, the system will be unusable regardless of the quality of the other two components.

We are actively collaborating with physicians, psychologists, and therapists to define problems and fine-tune augmented cognition solutions for healthcare providers, infants and children with autism spectrum disorder, individuals with limited speech and physical abilities, individuals with sensory impairments, and the elderly. Even apparently simple problems in these domains involve a complex web of interconnected elements with significant engineering and scientific implications. Therefore, much of our work at ACLab lies at the intersection of computer vision and machine learning, combined with multidisciplinary elements from the behavioral sciences. In summary, ACLab pursues two main lines of research:

- Digital Prosthetics: Replacing lost sensing and data processing functionality

- Intelligence Amplification: Enhancing sensing and data processing functionality

For many of these projects, augmented reality (AR) and virtual reality (VR) tools are essential for both the assessment and enhancement portions of the work. Below, you can find some of our active research projects. Also visit the Signal Processing, Imaging, Reasoning, and Learning (SPIRAL) Group for more information about our collaborative cluster.

ACLab Funded Projects:

Sony Faculty Innovative Award ($100K)

“Live Stream Temporally Embedded 3D Human Body Pose and Shape Estimation” (Role: PI, Date: 10/2023-09/2024)

“PFI-RP: Use of Augmented Reality and Electroencephalography for Visual Unilateral Neglect Detection, Assessment and Rehabilitation in Stroke Patients” (Role: CoPI, Date: 12/2023-11/2026)

NSF-DARE 2327066 ($150K)

“Collaborative Research: Development of a precision closed loop BCI for socially fearful teens with depression and anxiety” (Role: PI, Date: 11/2023-10/2026)

NSF-CAREER 2143882 ($600K)

“CAREER: Learning Visual Representations of Motor Function in Infants as Prodromal Signs for Autism” (Role: PI, Date: 05/2022-04/2027)

Northeastern-UMaine Seed Funding ($50K)

“Using artificial intelligence to examine the interplay between pacifier use and sudden infant death syndrome” (Role: CoPI, Date: 11/2020-10/2023)

NSF-IIS 2005957 ($190K)

“CHS: Small: Collaborative Research: A Graph-Based Data Fusion Framework Towards Guiding A Hybrid Brain-Computer Interface” (Role: PI, Date: 10/2020-09/2023)

Northeastern Tier 1 Award ($50K)

“Novel methods to quantify the affective impact of virtual reality for motor skill learning” (Role: CoPI, Date: 07/2020-09/2021)

Northeastern GapFund360 ($50K)

“A-Eye: A Nanotechnology and AI-assisted Artificial Cone Cell Capable of Color and Spectral Recognition” (Role: CoPI, Date: 01/2020-12/2020)

ADVANCE Mutual Mentoring Grant ($3K)

“Translating the ‘Mastermind’ Concept from Business to Academia: Facilitating Peer mentorship among female PIs leading active research labs” [Project Link] (Role: CoI, Date: 01/2020-12/2020)

NSF-NRI 1944964 ($102K)

“NRI: EAGER: Teaching Aerial Robots to Perch Like a Bat via AI-Guided Design and Control” (Role: PI, Date: 10/2019-09/2020)

NSF-IIS 1915065 ($395K)

“SCH: INT: Collaborative Research: Detection, Assessment and Rehabilitation of Stroke-Induced Visual Neglect Using Augmented Reality (AR) and Electroencephalography (EEG)” [Project Link] (Role: PI, Date: 09/2019-08/2023)

NSF-NCS 1835309 ($999K)

“NCS-FO: Leveraging Deep Probabilistic Models to Understand the Neural Bases of Subjective Experience” (Role: CoPI, Date: 08/2018-07/2021)

Amazon AWS ($30K)

“Synthetic Data Augmentation for Deep Learning” [Project Link] (Role: PI, Date: 07/2018-09/2020)

Biogen ($20K)

“Low-Cost Kinematic Measures for In-Clinic Assessments of Neurodegenerative Diseases” (Role: PI, Date: 05/2019-12/2019)

NSF-IIS 1755695 ($169K)

“CRII: SCH: Semi-Supervised Physics-Based Generative Model for Data Augmentation and Cross-Modality Data Reconstruction” [Project Link] (Role: PI, Date: 06/2018-06/2020)

Northeastern Tier 1 Award ($50K)

“Decoding Situational Empathy: A Graph Theoretic Approach towards Introducing a Quantitative Empathy Measure” [Project Link] (Role: PI, Date: 07/2017-09/2018)

MathWorks Microgrant ($20K)

“Compressive Sensing for In-Shoe Pressure Monitoring” [Project Link] (Role: PI, Date: 06/2017-06/2018)

MathWorks Microgrant ($20K)

“Curriculum Development: Biomedical Sensors and Signals” (Role: PI, Date: 03/2016-06/2017)

NSF SBIR PHASE I-1248587 ($150K)

“Pressure Map Analytics for Ulcer Prevention” (Role: PI, Date: 01/2013-06/2013)
